Is the rate of production of useful ideas really dependent on the number of people involved?

Paul Romer at the Nobel Memorial Prize Ceremony

By Bengt Nyman from Vaxholm, Sweden - EM1B6039, CC BY 2.0, https://commons.wikimedia.org/w/index.php?curid=74934767

Conversations with Tyler is one of my favorite long reads at the moment. This recent talk with economist Paul Romer [recent winner of the Nobel Memorial Prize in Economics] overlaps nicely with many of my current obsessions [including English orthography!]. Today, let’s look at the rate of production of useful ideas. Romer brings up a paper by Bloom, Jones, Van Reenen, and Webb, “Are Ideas Getting Harder to Find?”

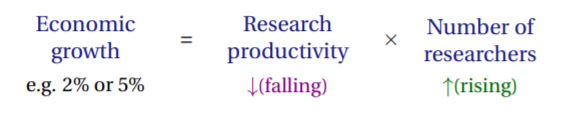

The model used here is a pretty simple one:
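Written out, the model looks roughly like this. This is reconstructed from the paper's framing and the description later in this post, not copied from the paper. $A_t$ is total factor productivity (TFP), $S_t$ is the effective number of researchers, and $\alpha_t$ is research productivity, defined as TFP growth per unit of research effort:

$$
\underbrace{\frac{\dot{A}_t}{A_t}}_{\text{economic growth}}
= \underbrace{\alpha_t}_{\text{research productivity}}
\times \underbrace{S_t}_{\text{number of researchers}},
\qquad
\alpha_t \equiv \frac{\dot{A}_t / A_t}{S_t}.
$$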

Here is what Romer says on the subject:

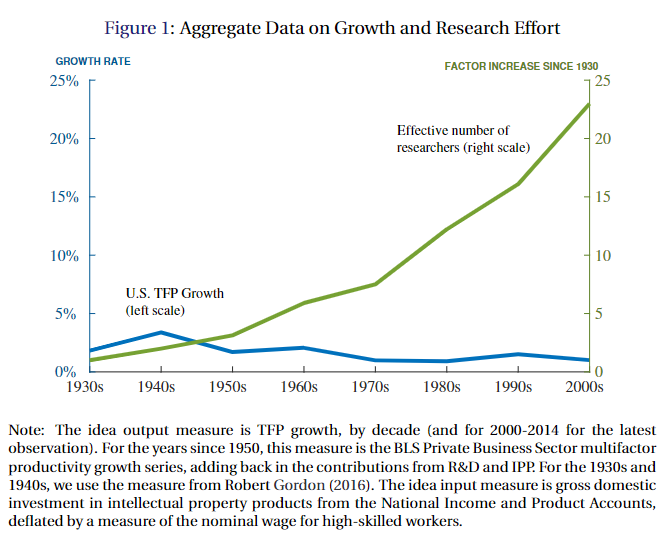

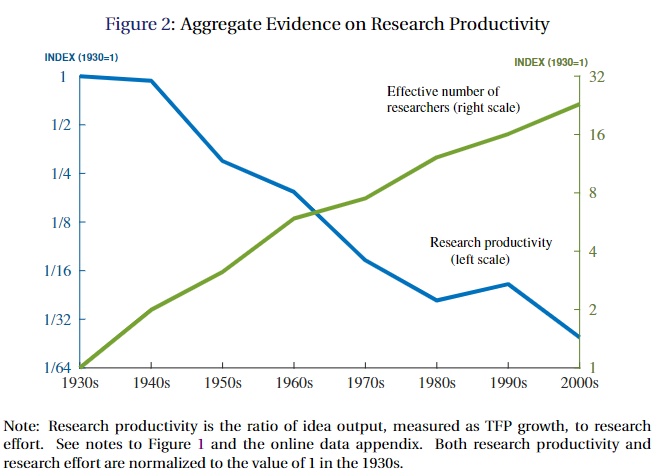

> Chad Jones has really been leading the push, saying that to understand the broad sweep of history, you’ve got to have something which is offsetting the substantial increase in the number of people who are going into the R&D-type business or the discovery business. And that could take the form either of a short-run kind of adjustment cost effect, so that it’s hard to increase the rate of growth of ideas. Or it could be, the more things you’ve discovered, the harder it is to find other ones, the fishing-out effect.

I’ve had some thoughts myself about whether it really is harder to find new ideas, but I wonder whether the model posited is really telling us anything interesting. The equation has the form of a rate [research productivity per researcher] multiplied by the number of researchers. But per the notes in the paper, research productivity is defined as TFP growth divided by research effort, which is proxied by the number of researchers scaled by wages. This just cancels out the number of researchers, and gives us something like growth equals research productivity with an average wage fudge factor.

Number of researchers = people who work in IP generation

Research productivity and the number of researchers are anti-correlated by construction, given the way they are defined.
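The cancellation is easier to see with concrete numbers. Below is a small Python sketch with made-up series. The `growth` and `effort` values are hypothetical and are not taken from Bloom et al. If research productivity is defined as growth divided by effort, multiplying it back by effort returns the original growth, whatever effort does. And if growth stays roughly flat while effort rises, the computed productivity has to fall.

```python
# Hypothetical series for illustration only; these are NOT data from Bloom et al.
growth = [0.020, 0.019, 0.020, 0.018, 0.019]  # annual TFP growth, roughly flat
effort = [1.0, 2.0, 4.0, 8.0, 16.0]           # effective researchers (index), doubling


def research_productivity(g, s):
    """Research productivity defined as TFP growth per unit of research effort."""
    return g / s


def pearson(xs, ys):
    """Pearson correlation coefficient, computed without external libraries."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


alpha = [research_productivity(g, s) for g, s in zip(growth, effort)]

# "Growth = research productivity x researchers" then holds by construction:
# multiplying alpha back by effort just returns the growth we started with.
reconstructed = [a * s for a, s in zip(alpha, effort)]

for g, s, a, r in zip(growth, effort, alpha, reconstructed):
    print(f"S={s:5.1f}  growth={g:.3f}  alpha={a:.5f}  alpha*S={r:.3f}")

# With growth flat and effort rising, alpha must fall: a built-in anti-correlation.
print(f"corr(effort, alpha) = {pearson(effort, alpha):.2f}")
```

Nothing about how ideas are actually produced enters this calculation. The identity and the negative correlation both come from the definition alone.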

What it looks like to me is that the rate of intellectual discovery is flat to slightly declining [when defined as equal to TFP growth], and that the number of people involved is completely irrelevant once you reach some relatively small threshold. I think the growth model referenced above is mostly useless for what it purports to be about.

That is a pretty bold statement, but I stand by it. My prediction is that you would get about the same rate of growth if you took most of the people doing “research” and had them do something else. On balance, they contribute nothing. [Or in my darker moments, I suspect a net negative contribution is possible…] In thinking about this, I’ve made a number of simplifying assumptions, so let’s look at those.

#1: Innovation and scientific discovery are almost wholly the product of a few brilliant minds

This growth model matches up with other kinds of growth models in economics. When you are talking about how fast you make stuff, it is pretty plausible to think that adding more people will increase the overall rate of making stuff, even if you account for differences in ability. This is because, given a method of production, there really isn’t much absolute difference in people’s ability to make stuff. You can probably usefully model such differences as normally distributed, which works well in general linear models.

For intellectual activity, this doesn’t seem to be the case. The distribution of accomplishment looks nothing like the distribution of ability. In practice, a tiny fraction of scientists produce the vast majority of results, with a distribution that looks something like a power law. This isn’t a particularly obscure result, but it doesn’t seem to enter into the model.
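As a rough illustration, the Python sketch below compares two hypothetical populations of 10,000 or so "researchers." In the first, output is normally distributed; the mean of 100 and standard deviation of 15 are arbitrary choices. The second follows Lotka's inverse-square law, where the number of people producing n papers is proportional to 1/n². The population sizes and the cap of 100 papers are assumptions made for illustration, not estimates from any dataset. For each population, the sketch asks what share of total output the top 10% of people produce.

```python
from statistics import NormalDist


def top_share(outputs, frac):
    """Share of total output produced by the top `frac` of individuals."""
    ranked = sorted(outputs, reverse=True)
    k = max(1, round(len(ranked) * frac))
    return sum(ranked[:k]) / sum(ranked)


def normal_population(n=10_000, mean=100.0, sd=15.0):
    """Hypothetical 'normal' population: evenly spaced quantiles of N(mean, sd),
    used instead of random draws so the result is deterministic."""
    dist = NormalDist(mu=mean, sigma=sd)
    return [dist.inv_cdf((i + 0.5) / n) for i in range(n)]


def lotka_population(k=10_000, max_papers=100):
    """Hypothetical population following Lotka's inverse-square law:
    about k / n**2 people produce exactly n papers each."""
    people = []
    for n in range(1, max_papers + 1):
        people.extend([n] * round(k / n**2))
    return people


normal = normal_population()
lotka = lotka_population()
print(f"top 10% share, normal output: {top_share(normal, 0.10):.1%}")
print(f"top 10% share, Lotka output:  {top_share(lotka, 0.10):.1%}")
```

In this toy setup, the top decile of the normal population produces only a little more than its 10% headcount share. The top decile of the Lotka population produces over half of all papers. A model that treats researchers as roughly interchangeable units implicitly assumes something closer to the first case.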

How Jones et al. attempt to compensate for different levels of ability

Instead, the paper attempts to account for variations in productivity by looking at average wages. But again, this has the wrong form: wages don’t vary as much as productivity does, or in the same way.

#2: The roles of the most innovative researchers are already filled by the most productive people

I think this one is arguable, but close enough, especially for the kind of outsize talents that really drive Solovian growth. In a meritocratic age in particular, the vast majority of bright, talented people already get a chance. To a first approximation, the most talented people are already doing what they are good at, so if you add more people, you are adding researchers with only a small probability of contributing anything of huge impact. Given assumption #1, this is true even if the people you add to research are smart.
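Combining assumptions #1 and #2 gives a simple toy model, sketched below in Python. Its assumptions are hypothetical. First, researchers are hired in strict order of talent. Second, the researcher at rank r produces output proportional to 1/r, a Zipf-style rank rule that is roughly the shape a Lotka-type distribution takes when people are sorted by output. Under those assumptions, total output grows only logarithmically with headcount.

```python
def total_output(num_researchers):
    """Total output when researchers are added in order of talent and the
    researcher at rank r produces 1/r units (hypothetical Zipf-style rule)."""
    return sum(1.0 / r for r in range(1, num_researchers + 1))


for n in (1_000, 2_000, 10_000, 20_000):
    print(f"{n:>6} researchers -> total output {total_output(n):.2f}")

# Doubling headcount only adds the output of the new, lower-ranked half.
gain = total_output(2_000) / total_output(1_000) - 1
print(f"doubling from 1,000 to 2,000 researchers raises output by {gain:.1%}")
```

Here, doubling from 1,000 to 2,000 researchers raises total output by under 10%, because every new hire ranks below everyone already working. Real hiring is noisier than strict talent order, so this sketch overstates the diminishing returns. It shows the logic of the argument; it is not an estimate.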

Counterarguments

I can think of plausible counterarguments to the case above. I’ve made some of them before.

#C1: We aren’t talking about science, but engineering

The data behind the Lotka curve and other similar metrics mostly look at unusual accomplishments, like publishing a lot of papers or winning big prizes. However, the data Jones et al. are looking at are mostly about total factor productivity growth, which is pretty clearly applied science, or what most of us call technology and engineering. This is by its very nature more diffuse, and it needs a broader range of talents to actualize than a seminal paper does.

#C2: The historical rates of accomplishment in technology growth probably should be discounted because important things were left out

It is easy to build great things fast if you don’t need to worry about fracture analysis or environmental impacts. I’m sometimes horrified by the huge costs borne by the public during the Industrial Revolution, but I don’t face the choices they did either. Modern engineering is more labor-intensive than it used to be because we have to integrate a much more comprehensive body of knowledge. Consequently, accidents of all types, environmental pollution, and infrastructure disasters are all less common than they used to be [with a huge caveat for China].

I take this as a justification for including all of the extra people who get paid to generate intellectual property. To be fair, not everyone involved in STEM work in the US falls into this bucket, and depending on how broadly IP is defined, it could include a lot of non-STEM workers too.

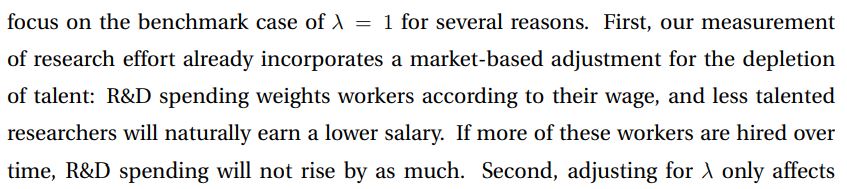

#C3: Sectors like pharmaceuticals seem to show this pattern of declining efficiency

Eroom’s Law

On balance, I still think it is a little off-base to inflate the number of effective researchers so heavily over the last 90 years. When you take everything into account, I think that even in technology, real advances follow something more like a Lotka curve. You do need a lot more people to get things done than you used to, but not 20 or 30 times as many, which is what Figure 1 from the Jones paper implies.
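For scale, here is the compound-growth arithmetic behind a 20- to 30-fold increase over 90 years, using the figures quoted above. The helper name is just illustrative. A steady rate of roughly 3.4% to 3.9% per year is enough to produce that increase. If measured TFP growth stays roughly flat, then under the paper's definition measured research productivity falls at about the same pace.

```python
def implied_annual_rate(factor, years):
    """Constant annual growth rate that multiplies a quantity by `factor` over `years`."""
    return factor ** (1.0 / years) - 1.0


YEARS = 90  # the span discussed in the text
for factor in (20, 30):
    rate = implied_annual_rate(factor, YEARS)
    print(f"{factor}x over {YEARS} years is about {rate:.2%} per year")
```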

Pharma does look bad, but if you look at something like how much imaging has improved, which heavily leverages Moore’s Law, medicine as a whole has developed quite a lot of new technology. What you get for it is another story, of course.
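The gap between the two trends compounds quickly. The Python sketch below uses two commonly quoted rules of thumb, which are assumptions here rather than precise measurements. For Moore's Law, transistor counts double roughly every two years. For Eroom's Law, new drugs approved per inflation-adjusted R&D dollar halve roughly every nine years. The 40-year horizon is an arbitrary choice.

```python
def compound_factor(years, doubling_time):
    """Multiplicative change over `years` for a quantity that doubles every
    `doubling_time` years (a negative doubling_time means halving)."""
    return 2.0 ** (years / doubling_time)


YEARS = 40  # hypothetical horizon
moore = compound_factor(YEARS, 2.0)   # rule of thumb: doubling roughly every 2 years
eroom = compound_factor(YEARS, -9.0)  # rule of thumb: halving roughly every 9 years
print(f"Moore's Law over {YEARS} years: x{moore:,.0f}")
print(f"Eroom's Law over {YEARS} years: x{eroom:.3f} (about 1/{1 / eroom:.0f})")
```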
