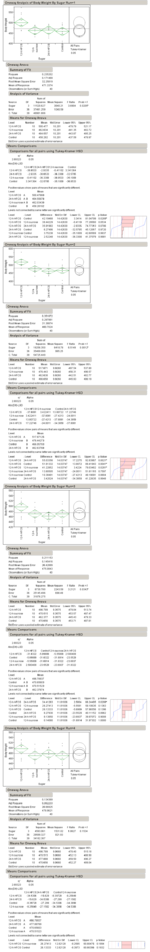

Corn Syrup and Rats

Steve Kamb at Nerdfitness posted a link on Facebook about a study that supposedly proves that high-fructose corn syrup makes you fat. I've always been pretty dubious about the bad rap that HFCS gets, and Archer-Daniels-Midland didn't even pay to have me say that. I'